How could data centers affect food production?

Looking at the impact of data centers, with hard data and lots of charts

Plans to build data centers have been rejected all over the country and all over my feed, from New Brunswick, NJ, to San Marcos, TX, to Coweta, OK.

Being honest, I don’t know how to feel just yet, but it’s obvious there are two sides to the battle: the techies, who believe that AI is a net good we should invest in, and everyone else, who, understandably, doesn’t welcome the incoming pollution and being relegated to a ‘permanent’ underclass.

So, I’m gonna break down as many of the numbers as I can to help make sense of this thing.

Possibility #1: Electricity

What are data centers?

For most things on the internet to work, including AI, computers need to send requests to a computer server somewhere to run the calculations. Data centers are just massive buildings that hold a bunch of computer servers.

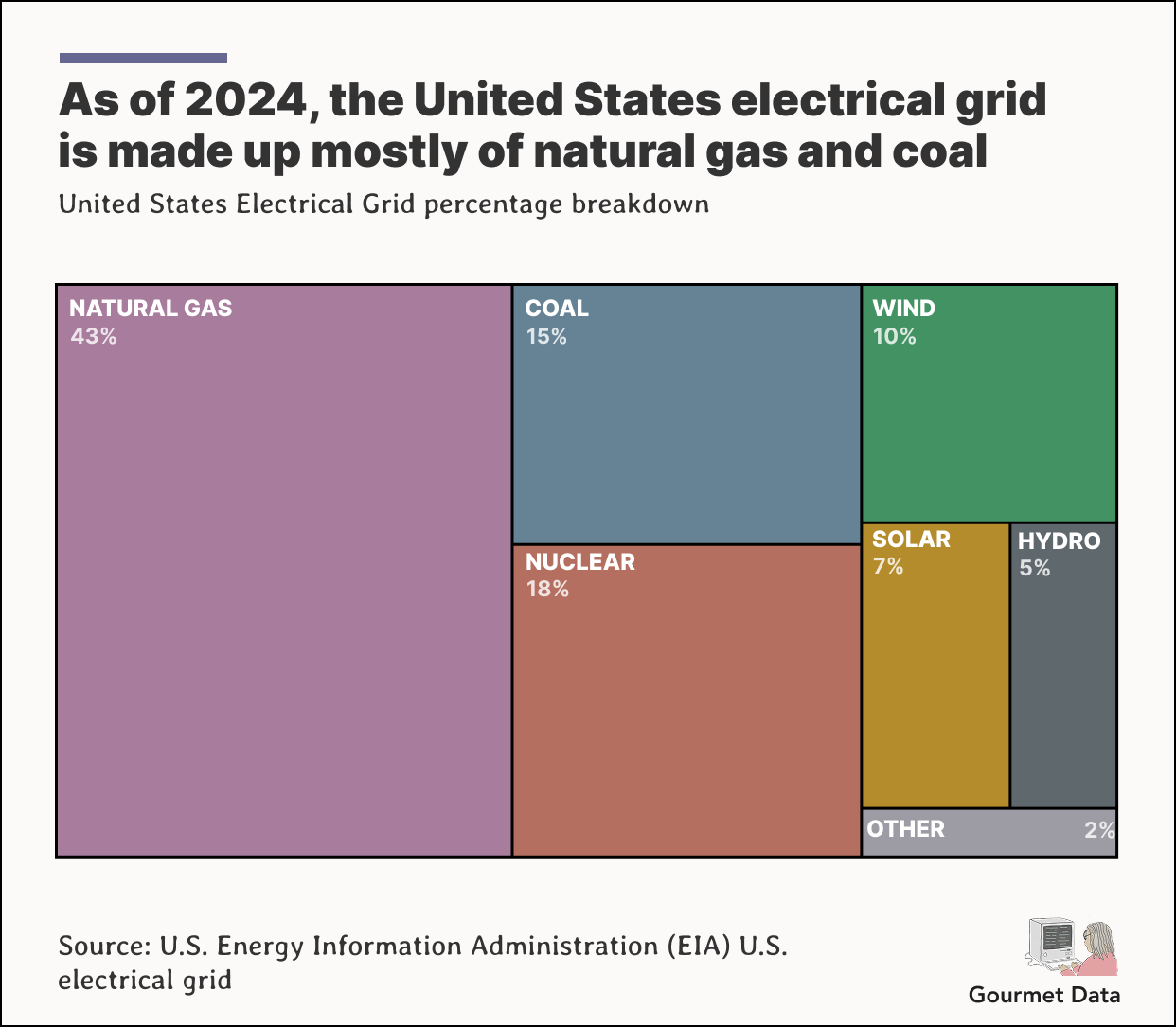

Because data centers are a large, continuous load, they run on grid electricity, and that is at the center of many of the big debates surrounding them. Currently, the US grid is powered by a few different sources, from solar to nuclear to natural gas. Which brings us to the first concern: to power these centers, we will probably need to rely on ‘dirty energy’.

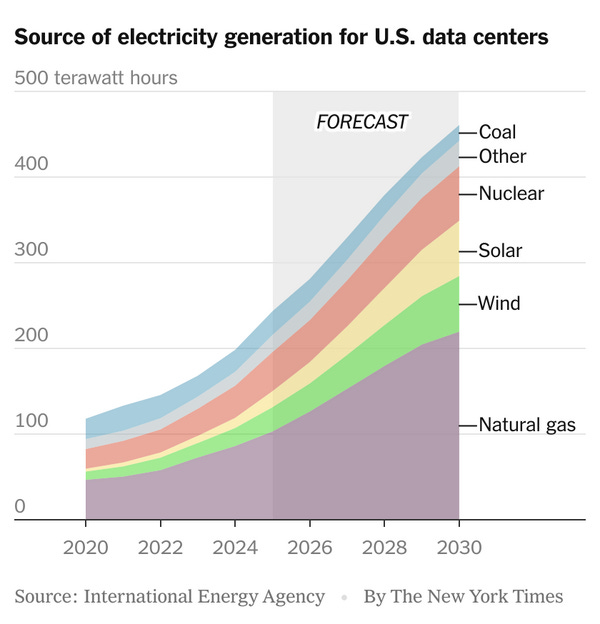

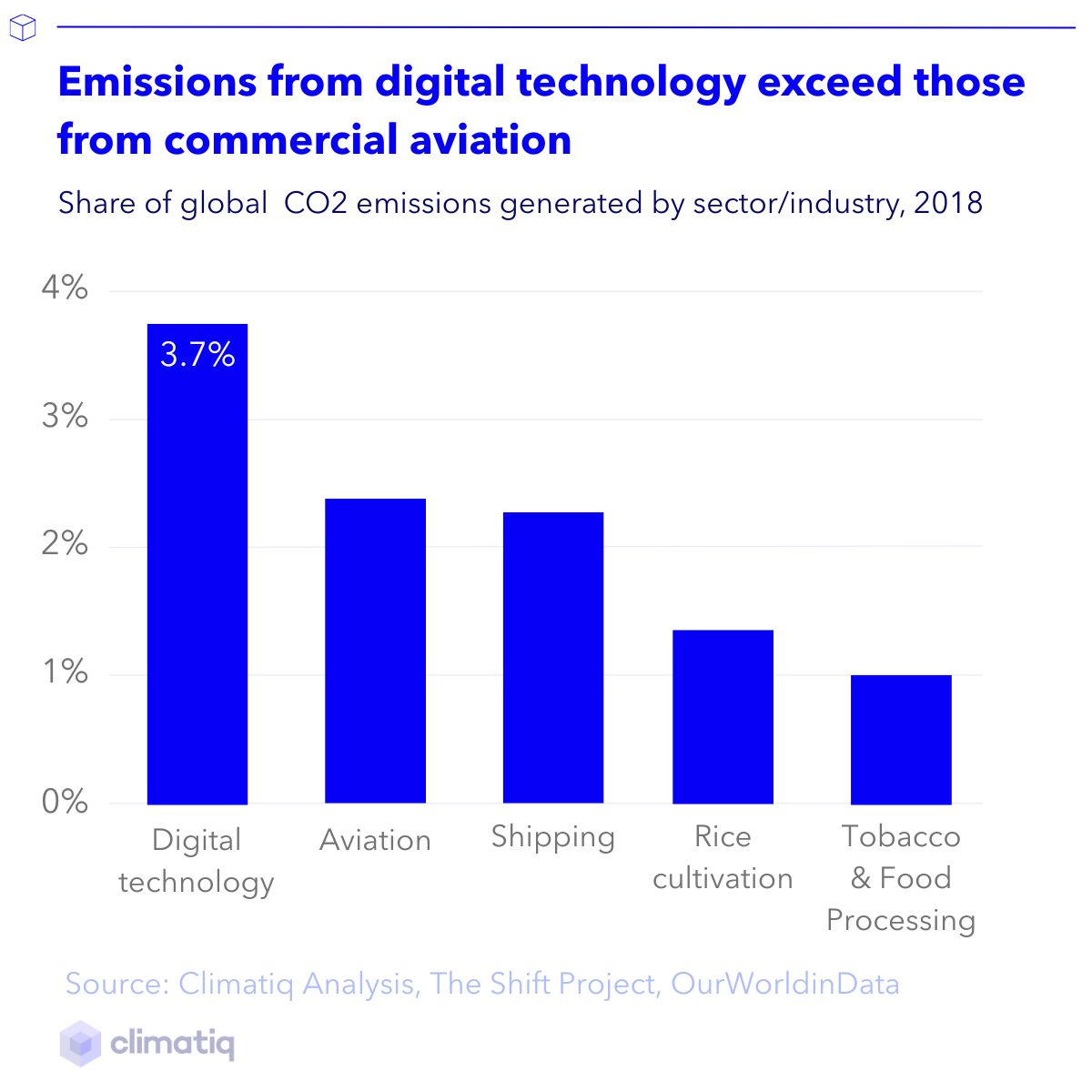

Now, compared to agriculture, which is responsible for 1/4 of the world’s greenhouse gas emissions, data centers are responsible for somewhere between 0.5% and 4% of carbon emissions. And there’s only so much we can improve our renewable energy capabilities in the next few years, especially since the New York Times is forecasting that the demand for electricity generation will double in the next few years.

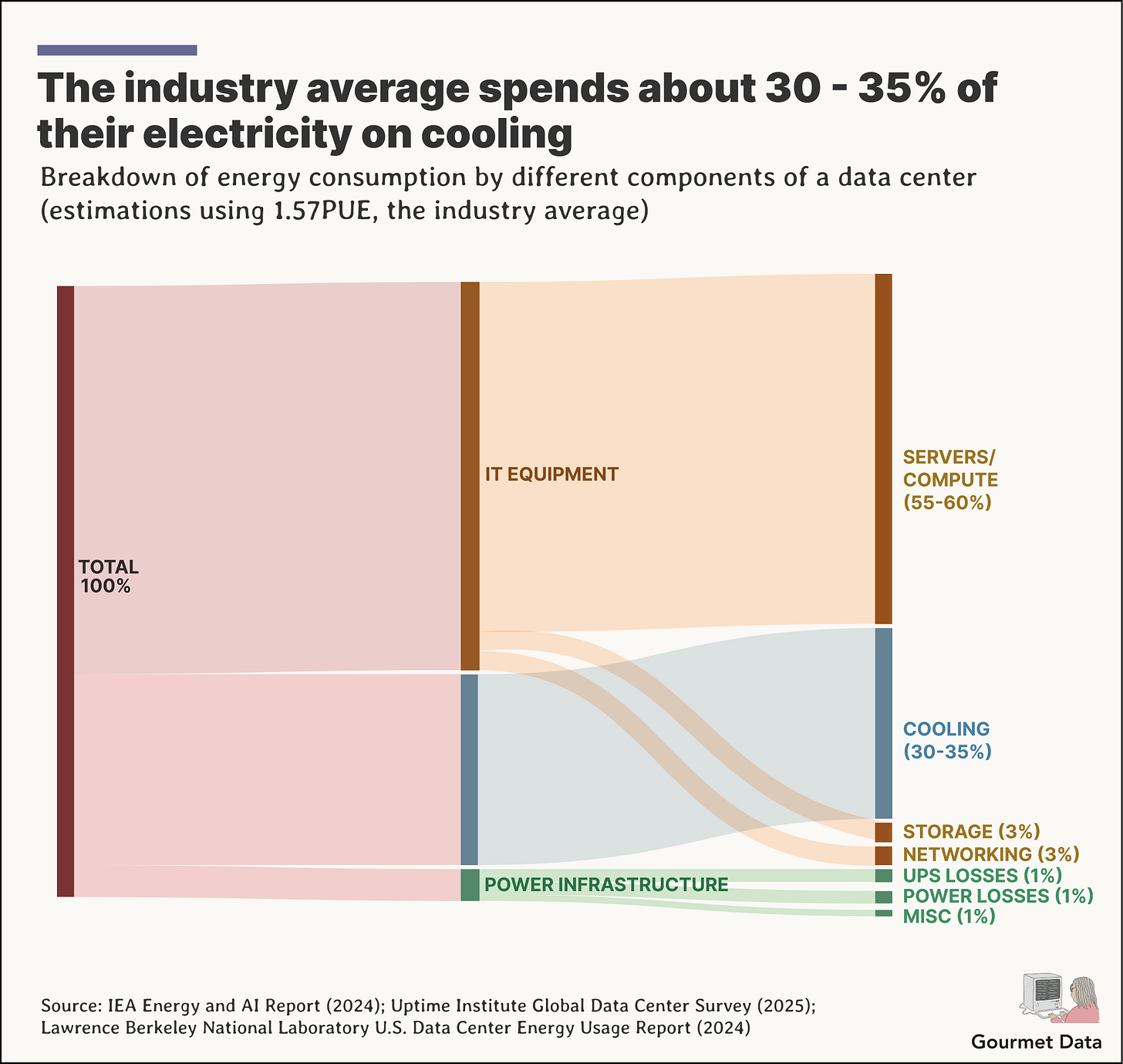

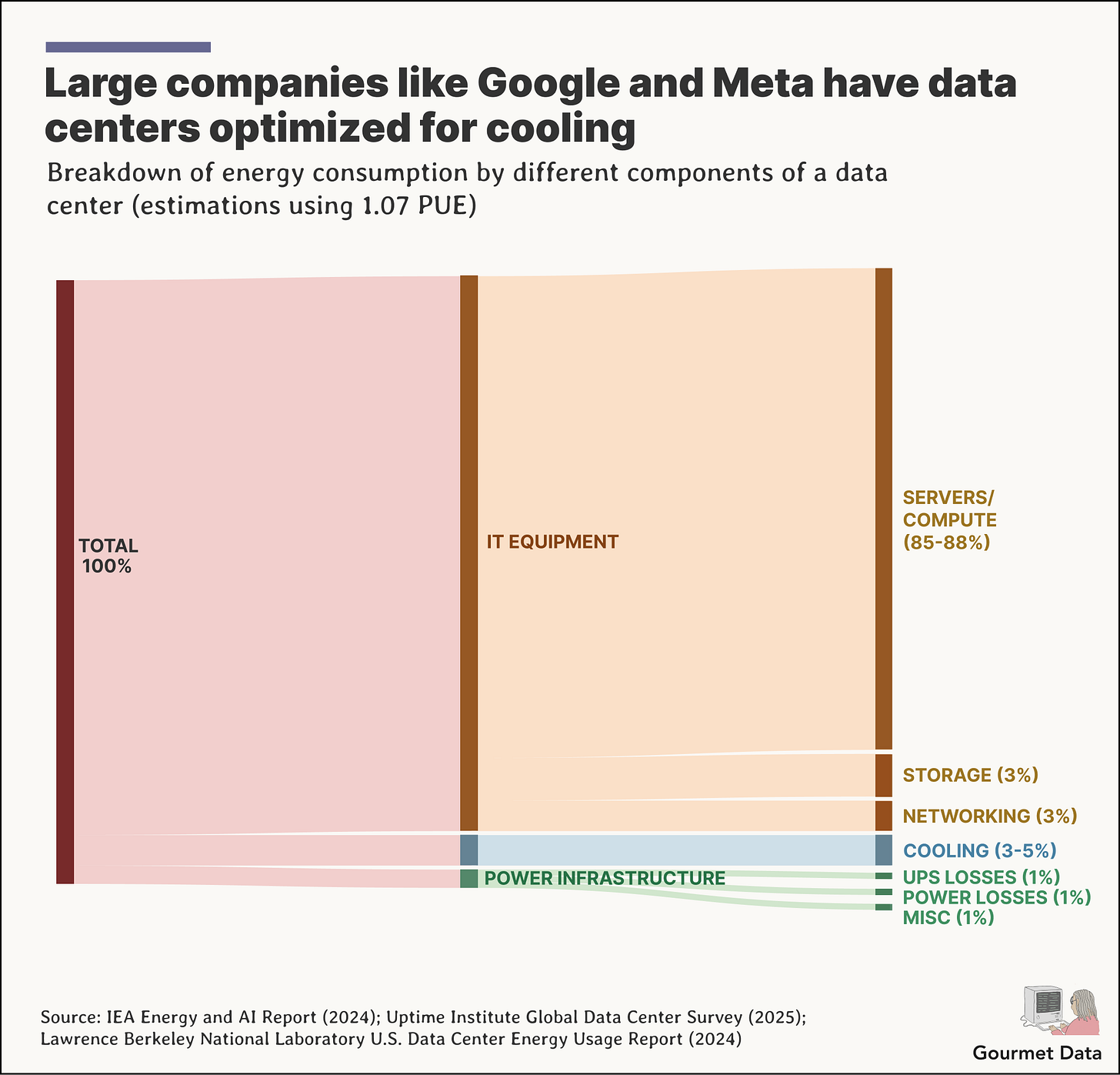

Why does a data center need so much electricity?

There are three main reasons data centers need electricity: cooling, servers and IT equipment, and power distribution infrastructure (lighting, UPS systems, etc.). The largest shares go to IT equipment and cooling, but everything depends on something called PUE (power usage effectiveness), which is basically the total facility power ÷ IT equipment power.

It changes from data center to data center, and the industry average is a PUE of about 1.56, which means that for every 1 watt going to IT, 0.56 watts go to overhead (cooling, power distribution, etc.).
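To make the ratio concrete, here is a minimal Python sketch of the PUE arithmetic. The function names are mine, made up for illustration, and the only input is the 1.56 industry-average figure quoted above.

```python
def total_facility_watts(it_watts: float, pue: float) -> float:
    """Total facility power implied by PUE = total facility power / IT power."""
    if pue < 1.0:
        # The facility always draws at least as much as its IT equipment.
        raise ValueError("PUE cannot be below 1.0")
    return it_watts * pue


def overhead_watts(it_watts: float, pue: float) -> float:
    """Power going to everything that is not IT (cooling, distribution, etc.)."""
    return total_facility_watts(it_watts, pue) - it_watts


def it_share(pue: float) -> float:
    """Fraction of total facility power that actually reaches IT equipment."""
    return 1.0 / pue


pue = 1.56  # industry average quoted in the text
print(f"Overhead per 1 W of IT load: {overhead_watts(1.0, pue):.2f} W")
print(f"Share of total power reaching IT: {it_share(pue):.0%}")
```

A lower PUE means more of the power bill is going to actual computing, which is why the number gets compared across facilities.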

Let’s do some math.

Most typical enterprise data centers consume somewhere in the range of 120-240 megawatt-hours (MWh) per day (equivalent to drawing 5–10 megawatts continuously).

If the average U.S. home uses about 863 kWh/month, a regular data center, at 5 MW, would use the same amount of electricity as over 4,000 homes.

Hyper-scale facilities can sit closer to the 100 MW range. A 100 MW continuous draw, 24 hours a day, comes to about 72 million kWh/month, or roughly 83,000 homes’ worth of monthly electricity. Yikes.

As I said before, each data center has its own PUE, and some companies with resources have gotten better at allocating more power to compute rather than cooling. Rod McLaren created a neat little list on his blog.
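If you want to check the math yourself, here is a small Python sketch of the home-equivalent calculation. It assumes a 30-day month (my assumption, not an official figure) and uses the 863 kWh/month average home from above; the function names are just for illustration.

```python
HOURS_PER_DAY = 24
DAYS_PER_MONTH = 30            # assumption: a round 30-day month
AVG_HOME_KWH_PER_MONTH = 863   # average U.S. home figure quoted in the text


def monthly_kwh(continuous_mw: float) -> float:
    """Energy used in a month by a load that draws `continuous_mw` nonstop."""
    kilowatts = continuous_mw * 1000
    return kilowatts * HOURS_PER_DAY * DAYS_PER_MONTH


def home_equivalents(continuous_mw: float) -> float:
    """How many average homes use the same electricity in a month."""
    return monthly_kwh(continuous_mw) / AVG_HOME_KWH_PER_MONTH


for mw in (5, 10, 100):
    print(
        f"{mw} MW -> {monthly_kwh(mw):,.0f} kWh/month, "
        f"about {home_equivalents(mw):,.0f} homes"
    )
```

The key unit detail is that megawatts measure a rate and megawatt-hours measure an amount, so a steady draw has to be multiplied by hours before it can be compared to a home's monthly bill.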

How much electricity does AI use, specifically?

The recent increase in attention on data centers is specifically because of AI, so let’s do some calculations with that in mind.

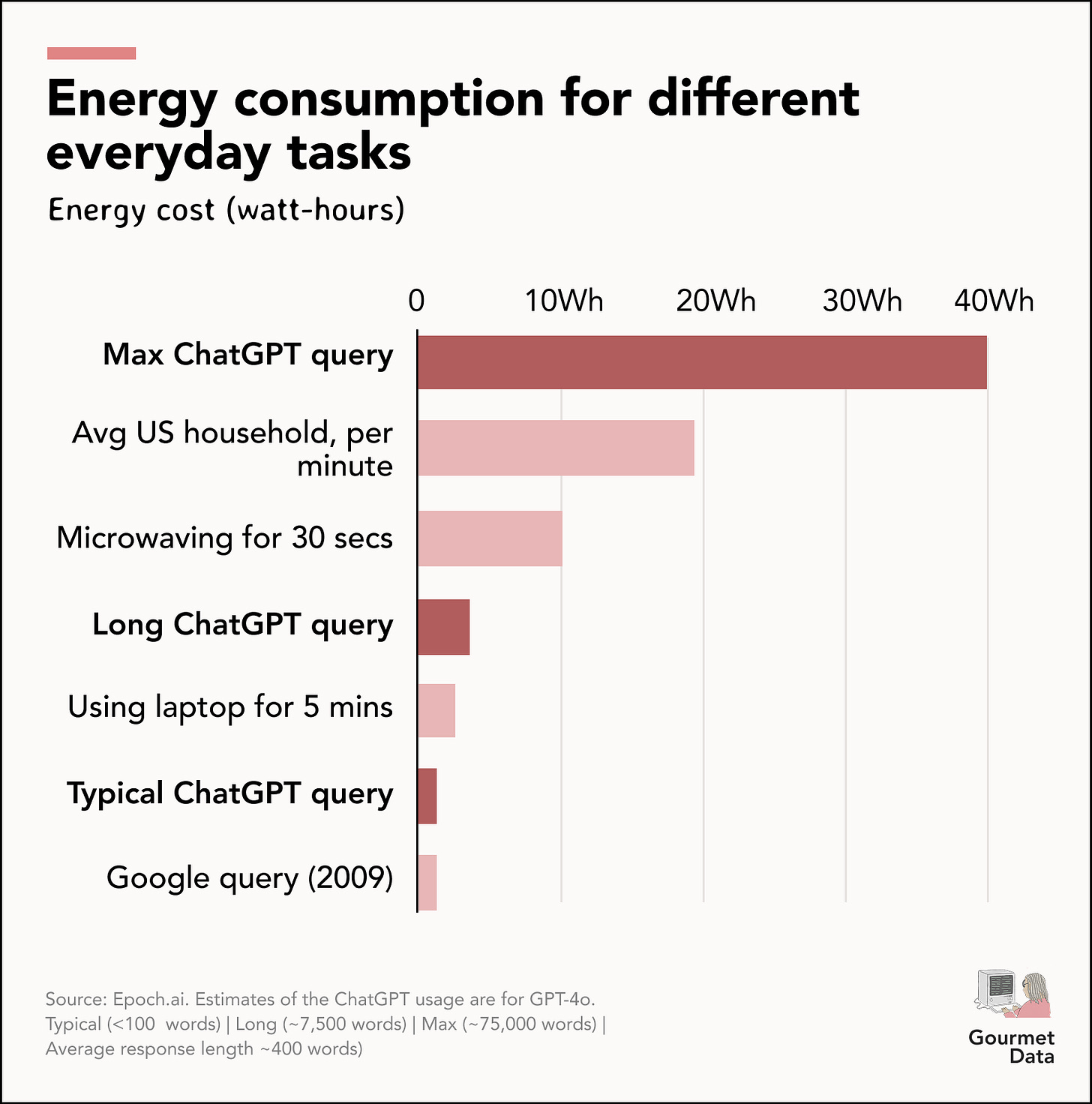

The data is murky at best, because it’s often self-reported. There was an old Google claim (from 2009) that each search would cost about 0.3 Wh. And Sam Altman says ChatGPT costs 0.34 Wh per query. (Though, if I were to guess, Google’s energy usage has probably decreased by quite a lot since 2009.)
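For a sense of scale, here is a rough Python sketch that converts a per-query figure into "queries per average home-month." It takes the self-reported 0.34 Wh number at face value and reuses the 863 kWh/month home figure, so treat the result as a ballpark only; the function name is made up for illustration.

```python
HOME_KWH_PER_MONTH = 863  # average U.S. home figure used earlier in the text


def queries_per_home_month(wh_per_query: float) -> float:
    """Number of queries that add up to one average home's monthly electricity."""
    if wh_per_query <= 0:
        raise ValueError("wh_per_query must be positive")
    home_wh = HOME_KWH_PER_MONTH * 1000  # convert kWh to Wh
    return home_wh / wh_per_query


# Self-reported figures quoted above; neither is independently verified here.
for label, wh in (("Google search (2009 claim)", 0.3), ("ChatGPT query", 0.34)):
    print(f"{label}: about {queries_per_home_month(wh):,.0f} queries per home-month")
```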

Epoch.AI created this graph that breaks it down smartly, and they actually expand on how they got to those numbers here and in this spreadsheet.

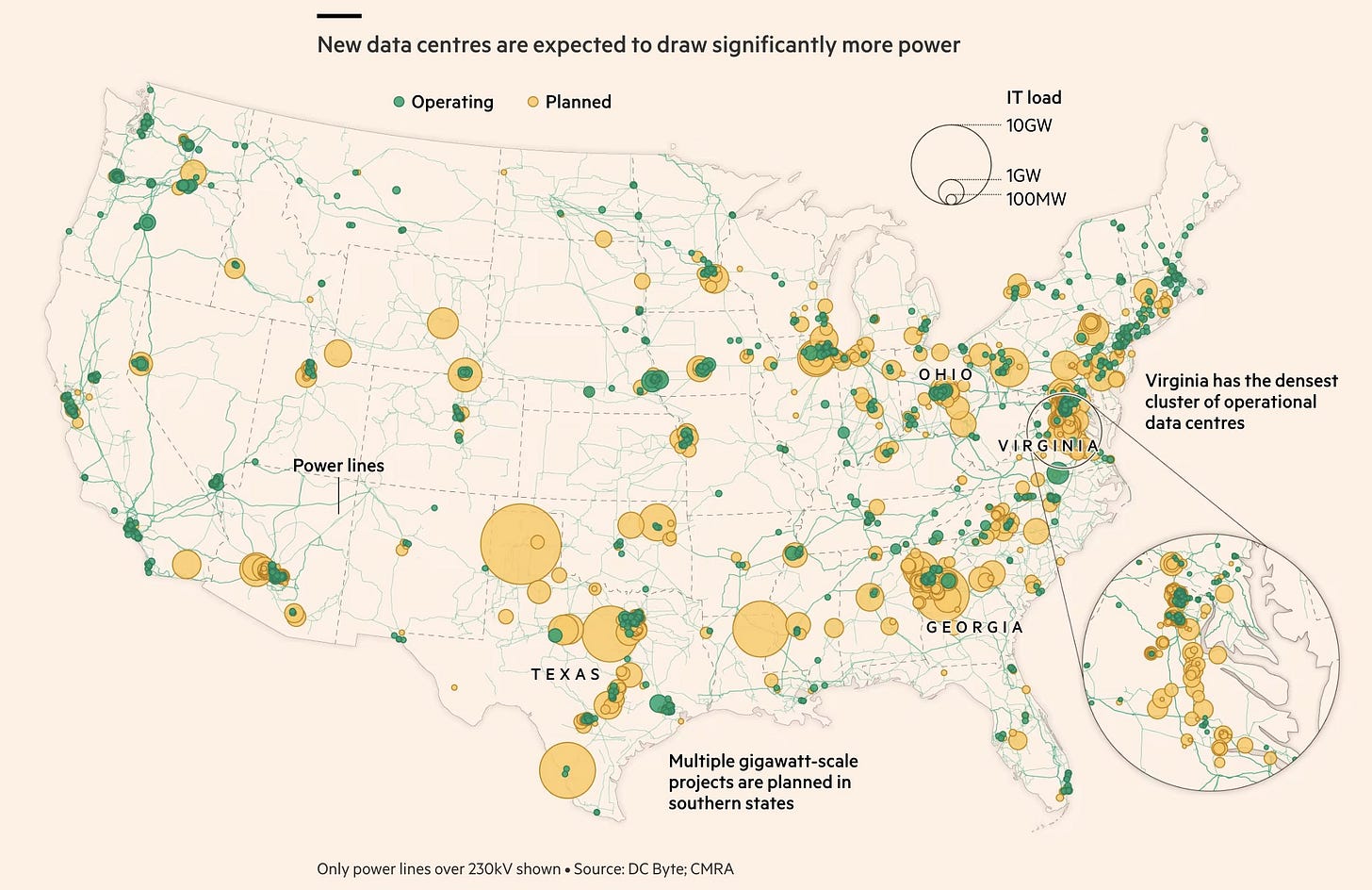

Possibility #2: Land Use

There have been proposals to put data centers in both rural areas, due to cheaper land, and more urban areas, due to proximity to people and shorter network distances. This map by the Financial Times does a decent job of showing the landscape for data centers, both existing and planned.

For land, there are already documented tensions in places like Virginia and the Midwest, where data centers are competing for land that could be used for farming. Landowners may also be impacted by 24/7 lighting and noise from the facility.

If you want to read more, this piece talks more about data center land use.

Possibility #3: Water

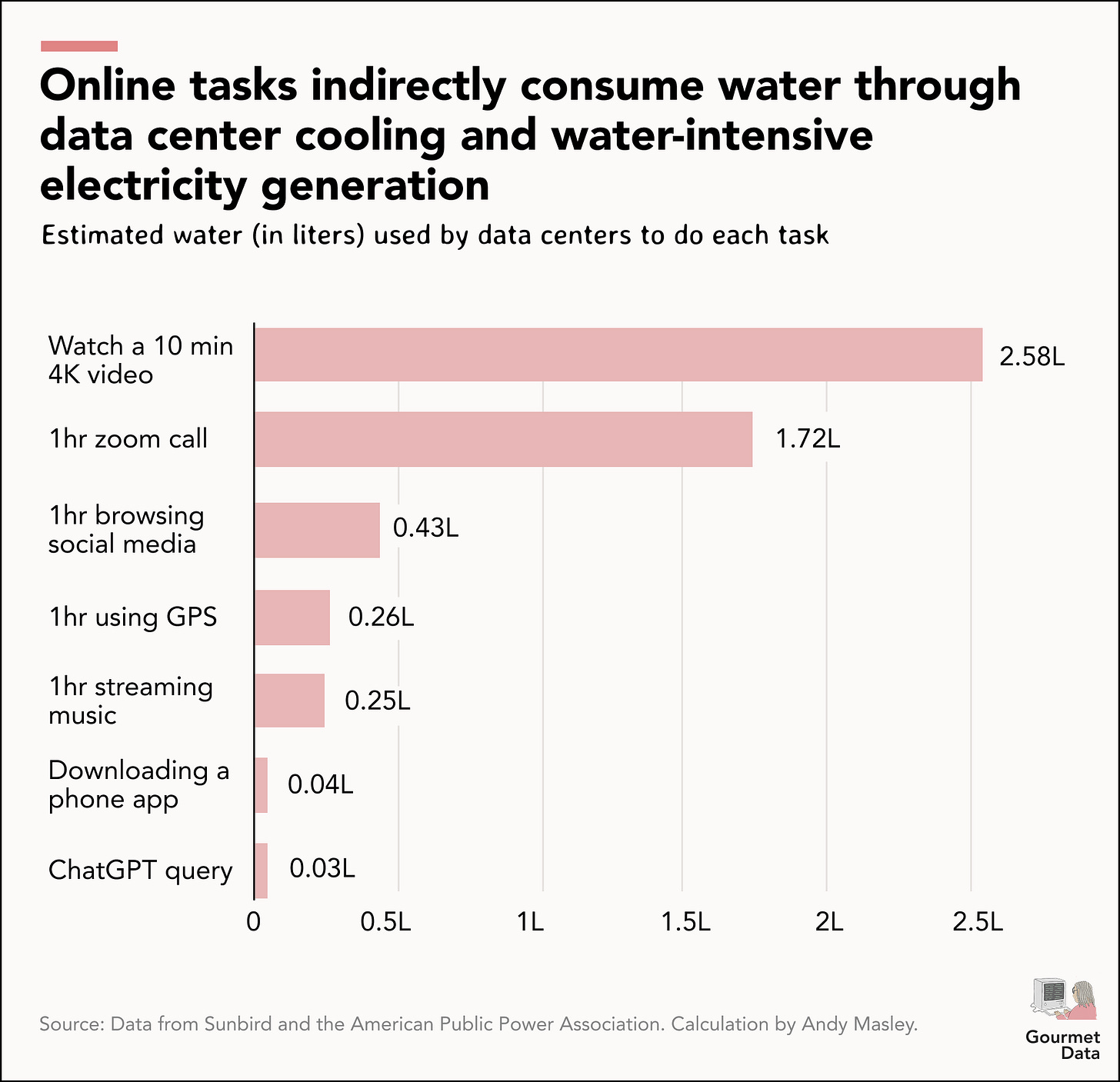

Something else we need for data centers is water, for cooling and for other reasons. Computers generate a bunch of heat and often need water to cool them down, and water also goes into generating the power they run on. One widely cited “average” is around 1.9 liters per kWh.

A typical enterprise data center drawing 5 MW would use about 120 MWh a day, or 3,600 MWh/month. At 1.9 liters/kWh, that’s roughly 6.8 million liters of water per month, or about 2.7 Olympic swimming pools of water a month. In a world where we already have to worry about water scarcity, it’s understandable why AI isn’t as popular with the environmentalists.

Interestingly enough, the data shows that it’s not reserved only for AI queries. Comparatively, AI queries are less of a strain on water than watching YouTube videos.
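Here is the same water arithmetic as a short Python sketch, so you can swap in your own inputs. It assumes a 30-day month and a nominal 2.5-million-liter Olympic pool (50 m × 25 m × 2 m); both are my assumptions, and the 1.9 liters/kWh figure is the “average” quoted above, which varies a lot by facility.

```python
LITERS_PER_KWH = 1.9             # widely cited "average" from the text
OLYMPIC_POOL_LITERS = 2_500_000  # assumption: nominal 50 m x 25 m x 2 m pool
DAYS_PER_MONTH = 30              # assumption: a round 30-day month


def monthly_water_liters(continuous_mw: float,
                         liters_per_kwh: float = LITERS_PER_KWH) -> float:
    """Water use per month for a facility drawing `continuous_mw` nonstop."""
    kwh_per_month = continuous_mw * 1000 * 24 * DAYS_PER_MONTH
    return kwh_per_month * liters_per_kwh


def olympic_pools(liters: float) -> float:
    """Express a volume of water in nominal Olympic swimming pools."""
    return liters / OLYMPIC_POOL_LITERS


liters = monthly_water_liters(5)  # the 5 MW enterprise example
print(f"{liters:,.0f} liters/month, about {olympic_pools(liters):.1f} Olympic pools")
```

Changing `liters_per_kwh` is the quickest way to see how sensitive the total is to the cooling design, since that one number carries most of the uncertainty.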

More so, the problem is with electricity generation: in America, anything you do that uses electricity often also uses water. Up to 73% of U.S. power plants (coal, natural gas, and nuclear) generate electricity by boiling water to create steam, which spins turbines. That process requires enormous amounts of water for cooling.

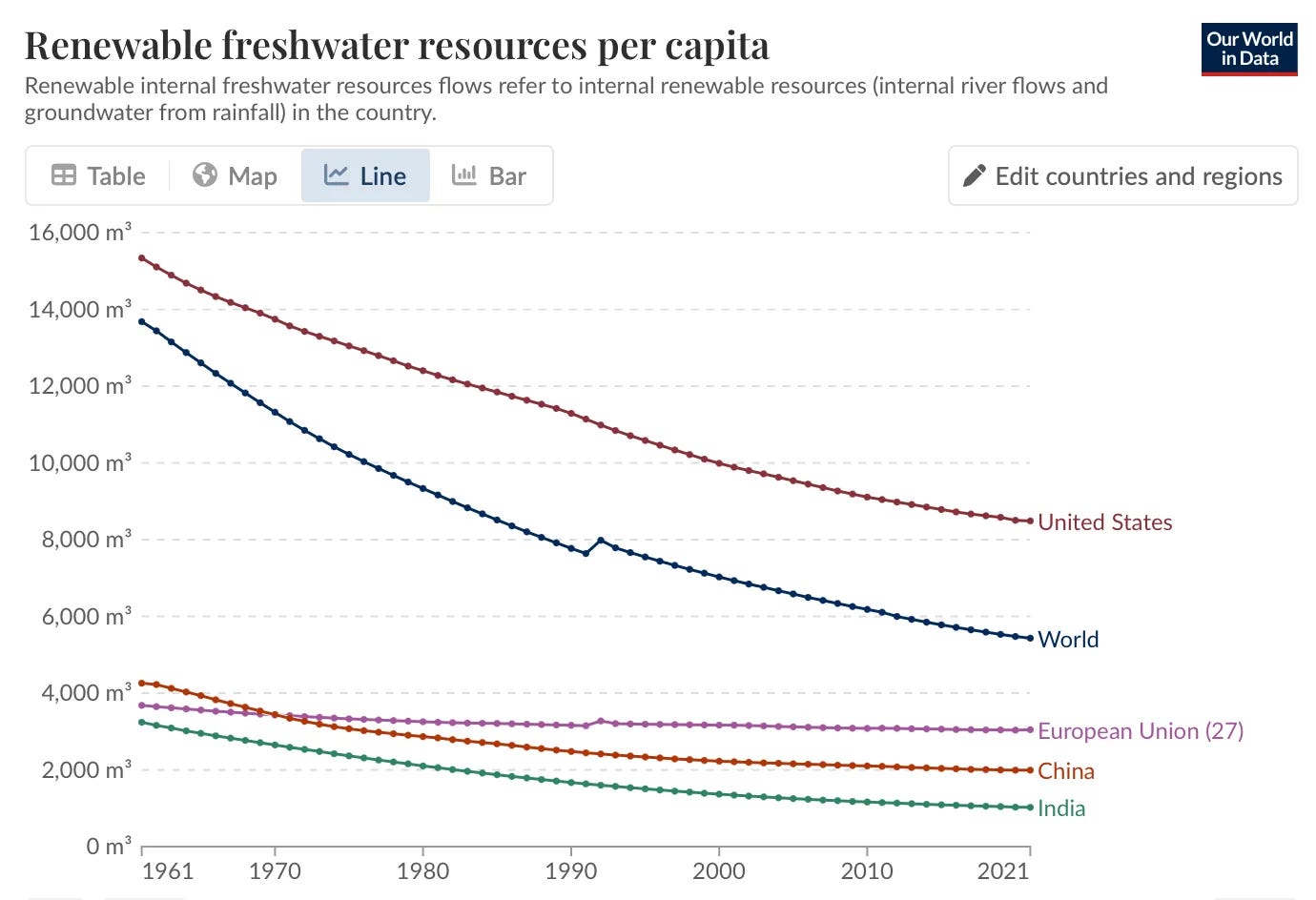

What also goes unacknowledged is the systems perspective: when you take water from a place, you have to reduce demand or put water back in that same place. Roughly two-thirds of the data centers built since 2022 have been located in water-stressed regions, according to a Bloomberg News article (that’s been debated), and agriculture, the biggest consumer of water in our country, must reckon with that.

Data centers, not AI, are the real strain on our water, and they will inevitably compete against agriculture for freshwater sources. Technically, salt water can be used for cooling, but the corrosive properties of salt mean that companies prefer to pay for freshwater. And our supply of that is already declining.

Possibility #4: Pollution

Lastly, there is the pollution that stems from data centers, more specifically from their diesel generators. Now, I have to admit that this threat seems like less of a concern because the diesel generators are mostly used for backup; 99% of the time, data centers will be powered by whatever grid they’re on. Still, when the generators are used, they are awful.

There have already been reports of health consequences from living close to data centers. Data center generator pollution can rival power plant emissions in some scenarios. Not to mention, these data centers are construction projects. And, as stated in this NYTimes piece, it’s possible for these projects to pollute groundwater.

Something that might be a ‘positive’ pollutant (oxymoron, I know) is heat output. Data center computers run extremely hot, and the facilities emit significant waste heat into the surrounding environment. Some farmers have been capturing that energy and repurposing it for their farms.

There’s emerging research on localized temperature increases near large facilities, which could affect microclimates relevant to nearby farming, as well as things like noise that may also disrupt farm life. Outside of that, the carbon emissions from data centers, and the technology industry as a whole, are quite small compared to other industries. For comparison, agriculture is responsible for about 11% of global carbon emissions (we need food, so I’ll allow it).